A brief history of BOINC

David P. Anderson

26 Jan 2022; latest update Apr 2026

Thanks to Mike McLennan and others for edits.

Introduction

BOINC is a software system for "volunteer computing": it lets people donate time on their home computers and smartphones to science research projects. It has been used by about 50 projects in many areas of science, and has run on millions of computers.

Computing has emerged as a central tool in every area of science. BOINC sought to expand this tool by orders of magnitude, to involve the worldwide public directly in science, and to enable breakthrough science. It succeeded to some extent; BOINC-based computing contributed to over 1000 research papers. Hence I think that volunteer computing - and BOINC in particular - is an important chapter in the history of science.

This essay tells the story of BOINC from my perspective. It describes how things unfolded, and offers some theories about why things happened as they did. The story is partly about technology but mostly about people: personalities, aspirations, achievements, and conflicts. If anyone feels slighted, I apologize. If there's an omission or factual error, please let me know.

The origins of BOINC

David Gedye and SETI@home

I taught in the UC Berkeley Computer Science Dept. from 1985 to 1992. David Gedye was a graduate student there, and was a Teaching Assistant in the Operating Systems class I taught. We became running partners, and have been fast friends (ha ha) ever since.

Gedye conceived of the idea of volunteer computing, and he shared it with me in early 1995. Together with Woody Sullivan and Dan Werthimer we formed SETI@home, with the goal of using volunteer computing to analyze radio telescope data, looking for "technosignatures": synthetic signals coming from space.

Dan, Woody, me, Dave Gedye at 10th SETI@home anniversary in 2009

But from the beginning, Gedye and I thought of volunteer computing as being of potential use in all areas of science - physics, Earth Science, biomedicine, and so on. We talked of forming an organization called "Big Science" that would coordinate volunteer computing, and we briefly owned the domain 'bigscience.com'.

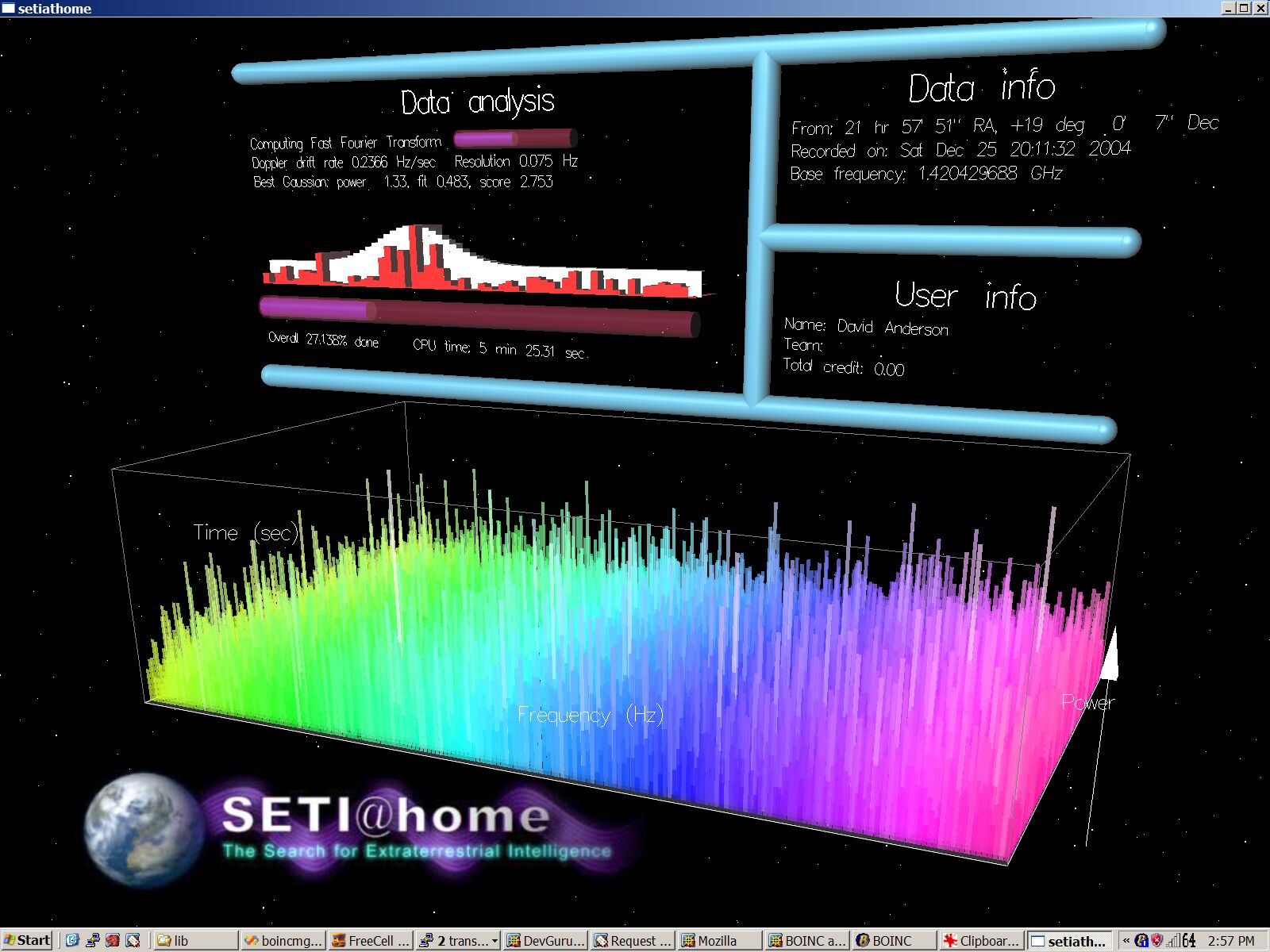

Two years passed. SETI@home eventually got some funding and we hired a few programmers. Gedye dropped out of the project. Eric Korpela joined and wrote the science code. I wrote most of the rest, including the screensaver graphics. In 1998 we got the whole thing working, and we launched in May 1999. It was a huge success. For about a year we got tons of mass-media publicity, and the number of volunteers soared to over a million. At one point we were getting over 1000 years of computer time per day - mind-boggling.

Initially, SETI@home was a single program, which included both the infrastructure part (network communication, fetching and returning jobs, working invisibly in the background) and the science part (the data analysis). Each time we changed the science part, all the volunteers had to download and install a new version of the program.

This was unsustainable; we needed to be able to change the science code frequently, with no user involvement. This meant separating the infrastructure from the science. Also, we found ourselves spending too much time on infrastructure issues (notably DB and server sysadmin) and not enough on science. I started thinking about creating a general-purpose infrastructure for volunteer computing, as in the Big Science model.

United Devices

In 2000 I was approached by a startup company, United Devices (UD), that wanted to develop such an infrastructure and monetize it. They proposed letting SETI@home use it for free (the details were never nailed down). They wanted to hire me as CTO, and I needed a job. My SETI@home colleagues were (justifiably) skeptical about this, but I managed to talk them into it. For about a year I worked for UD, commuting between Berkeley and Austin TX, and I developed an infrastructure for them. Then things went sour - UD hired some people who didn't like my approach and wanted to start over. So in 2001 I quit UD, and started working on BOINC - another volunteer computing infrastructure, this time open source, with no profit motive.

The genesis of BOINC

I wrote the central part of BOINC - client, server, Web code - in a few months in late 2001 and early 2002, on a Linux laptop, mostly working in a cafe called The Beanery in Berkeley (it's no longer there). I wrote the client and server in C++ and the web code in PHP.

In March 2002 United Devices filed a lawsuit against me, claiming that I had stolen their trade secrets and demanding that I stop working on BOINC. A policeman came up to Space Sciences Lab and dropped a thick stack of papers on my desk. This scared me for a few seconds, but when I looked at the list of supposed "trade secrets", I burst out laughing. Example: "an interface to shared memory that involves create, attach, detach, and destroy operations" [note: that's like claiming that tying your shoe is a trade secret]. I contacted the UC lawyers, and they arranged a meeting with UD, at which I pointed out that the UD claims were bogus and in fact UD was using ideas developed at UCB for SETI@home. The lawsuit was quickly withdrawn.

Ownership and license

I didn't aspire to do a startup and get rich from BOINC. So I decided that:

- All BOINC code - by me and by outside volunteers - would be owned by the University of California.

- The code would be open source, distributed under the LGPL license. This later caused friction with several companies that wanted to use BOINC, but mostly because their lawyers didn't know the difference between LGPL and GPL (which are very different).

The name and logo

Academic software projects tend to adopt ponderous names, often taken from Greek mythology: Hydra, Medusa, and so on. I wanted a less imposing name - something light, catchy, and maybe - like "Unix" - a little risqué. I played around with various acronyms and settled on "BOINC" - Berkeley Open Infrastructure for Network Computing. I thought of this as the sound of a drop of water falling into a pool. Maybe I should have picked a less silly name, but I don't think it would have made much difference.

I made the first BOINC logo in 5 minutes using Microsoft Word. I wanted it to resemble a comic-strip sound effect.

Later, I wanted BOINC to be taken more seriously, and I asked volunteers for new logos. I got lots of submissions. One of them - by Michal Krakowiak - was perfect, and I used it.

Eventually, I wanted a name for the entire paradigm - not just BOINC, but also other projects that had emerged: Folding@home, GIMPS, and distributed.net. People were incorrectly calling it "distributed computing" or "peer-to-peer computing". IBM World Community Grid unfortunately used "Grid" in their name, which created confusion with Grid computing.

I originally called it "public-resource computing", but this was clunky. Around 2005 I proposed "volunteer computing". People generally liked this (although Michela Taufer pointed out that "VC" sounds like "WC"). I adopted it, and used it everywhere, and tried to get other people to use it. Not many did, though I've seen it a few times in recent paper titles.

My vision

Let me describe my vision for volunteer computing - what it could and should be. This has several dimensions.

First, volunteer computing should enable new scientific research: projects that - like SETI@home - need more computing power than is available from existing sources, or that can't afford to buy it from those sources. It should also support existing research. All computational scientists - thousands of them, or tens of thousands - should benefit from volunteer computing.

An aside: some computational science needs supercomputers that can finish single jobs fast. But most of it - things like simulating chaotic systems and analyzing big data streams - involves lots of independent jobs. This is called "high-throughput computing" (HTC) because what matters is not how long it takes to finish each job, but the rate at which jobs are finished. Volunteer computing can handle HTC applications as long as their RAM requirements and file sizes are appropriate for home computers - and this is usually the case.

Conventional sources of HTC are clusters (at universities, research labs, and government-funded computing centers like TACC), grids (sharing of clusters between universities) and commercial clouds like Amazon Web Services. These typically cost scientists (or funding agencies) a lot of money. Also, these sources typically have at most on the order of 1 million CPUs, while volunteer computing has the potential of using a significant fraction of all the computers in the world: maybe 10 billion CPUs, and almost that many GPUs.

So volunteer computing can potentially provide thousands of times more computing power than conventional sources, enabling otherwise infeasible research. Furthermore, this research can be done by scientists with little or no funding, either because they're from poor countries, or because their research is unconventional and not favored by funding agencies.

Second - and it took me a while to realize this - volunteer computing can change volunteers, i.e. the world-wide general public. Volunteers can choose what research to support with their computers. In the course of making this decision (I hope) they learn about current scientific research, and about science in general. They think about the relative merit of areas and projects. They learn to think skeptically and rationally, and in large scales of time and space. They think of themselves as members of the human species - and of the Earth ecosystem - rather than as members of races, political parties, nations, and religions.

Along these lines, volunteer computing can democratize science funding, at least as far as computing is concerned. Decisions about the relative importance of different research areas and projects are made by the public, rather than by (possibly inbred or corrupt) government agencies. Given current trends I'm not sure this is actually a good idea, but at the time I was inspired by the Iowa Election Markets, which successfully used "collective wisdom" to predict election results and other things.

Finally, I wanted volunteer computing to be recognized in the academic and industrial worlds as a key paradigm for HTC, alongside cluster, cloud, and grid computing. There should be academic conferences and journals about it; computer scientists should do research on it and write PhD theses. Funding agencies should include it in their calls for proposals.

That's my vision. It's wonderful; I've pursued it doggedly, year after year, even as the pursuit became increasingly quixotic. However, my attempts to get other people to buy into the vision have been curiously unsuccessful. If someone revives the vision in some form, I'll be eager to help.

I also had some personal goals:

- My world view is deeply anti-establishment. I root for the underdog. Like Jack Black's character in "School of Rock", I want to Stick it to the Man. So the idea of volunteer computing - which shifts power away from corporations and the academic establishment and puts it in the hands of the people - resonated with me.

- Although in general I don't care about personal fame or fortune, I wanted recognition in the academic world (in part to compensate for having been denied tenure at UC Berkeley).

- I like science; I like working with scientists, learning about their research, and writing software to help them. I really enjoyed this aspect of SETI@home. I imagined that BOINC would involve me in science projects all over the world, and that I'd spend time traveling and working with them.

Funding and the NSF

By summer 2002, BOINC was a real thing. Someone suggested that I apply for a grant from the National Science Foundation (NSF) to pay for BOINC development, and I did. To my surprise, the proposal was funded. The program director, Dr. Mari Maeda, took a big chance on BOINC, and was extremely supportive. I'm forever grateful to her.

I got a position at UC Berkeley called "Research Scientist" - a job where you pay your salary out of grants. The university takes about 40% of your grants as "overhead" (of course, the association with the university is important for getting grants).

In the ensuing years, I wrote a series of NSF grant proposals, and many of them were funded. Mari Maeda left NSF, and Kevin Thompson became my program director. He was supportive, but a bit skeptical about volunteer computing.

Over the years, NSF put a lot of money into BOINC, and I think they viewed it as a success story. But I don't think anyone there took volunteer computing seriously as a paradigm for HTC. It seemed to me that NSF could save millions of dollars by using volunteer computing instead of building computing centers, and that NSF should encourage this in some way. But they didn't. Every few years NSF publishes a "road map" on the future of U.S. scientific computing. One of these (~2008) had a brief mention of volunteer computing, but later ones did not.

NSF has a PR department; they put out newsletters and maintain a web site. At one point I contacted them, and proposed that they publicize BOINC - after all, it was supplying computing power to several NSF-funded science projects, and attracting volunteers would benefit everyone. I also suggested the idea of creating public-service ads (print, TV, radio) through the Ad Council. There was initial interest in both ideas. But when I met with the NSF PR people in Washington, this interest had vanished. It was clear that the idea had been vetoed by someone higher up. I had an awkward lunch with the PR people; they kept shifting the conversation away from BOINC.

Not all my NSF proposals were funded, and there were times when I ran out of money. Various members of the BOINC community came forward and made generous donations to bridge the gaps: IBM, Mark McAndrew of Charity Engine, Bruce Allen of Einstein@home (on several occasions), and Anbince (see below).

The BOINC Project

My NSF grants provided enough money to not only pay my salary, but to hire some help, namely Rom Walton and Charlie Fenton. Rom was working at Microsoft and had already done some work for BOINC as a volunteer. Charlie Fenton, an expert Mac programmer, had worked on SETI@home. I was very lucky to find Rom and Charlie. They're both extremely smart, creative, tenacious, and dedicated. They ended up working on BOINC for almost 15 years. They worked remotely, even though Charlie lives nearby. It has been a great pleasure to work with them.

We didn't always see eye to eye, especially Rom and me. We often had different approaches to problems, and we spent many hours in (sometimes heated) discussions on the phone. But we pretty much always converged, hammering out solutions we were all happy with, and that were better than our original ideas.

Rom and I have different programming styles - my code tends to be much shorter than his. I put him in charge of three big chunks of code: the BOINC Manager, the Windows installer (using InstallShield), and the VirtualBox wrapper. I let him implement them more or less as he liked, and I didn't micromanage his code. This worked out well. He did tons of other stuff, like setting up Trac, a software management system we used before moving to GitHub.

Charlie did everything Mac-related, such as the installer. The "sandbox" aspect of this - creating users and groups to run apps more securely - was particularly intricate. Because Mac is Unix-based, he branched out into the Linux version as well. He developed the GPU detection part of the client, which is tricky: it has to run in a separate process, both because a) GPU drivers are crash-prone, and b) this reduces battery drain on Mac laptops with separate low- and high-power GPUs (if a process uses the high-power GPU, the OS keeps it on until the process exits).

To me, Rom and Charlie are computer super-heroes, each with his own super-powers. Rom is extremely good at figuring out complex technologies, like InstallShield, VirtualBox, and wxWidgets. Charlie has an astounding ability to develop big chunks of software that are 100% bug-free the very first time. I lack both of these skills, but I'm good at lateral thinking and at architecting big software systems. So we made a good team.

For a couple of summers, I hired UCB Computer Science undergrads to work on BOINC. One of them - Karl Chen - worked out extremely well. He was a Python devotee, and he developed a number of key Python scripts: to create a BOINC project, to start/stop projects, and so on. Actually, he wanted to rewrite all of BOINC in Python. In retrospect that might not have been a bad idea, although at that point Python's OS interfaces weren't as complete as they are today.

Some early students contributed to the server code (Michael Gary, Barry Luong, Hamid Aghdaee) and to the client (Eric Heien, Seth Teller).

Later, students worked on the PHP web code. That didn't work out so well - it typically took half the summer for them to come up to speed. The code they wrote tended to be funky, and I had to replace most of it. But it was fun having them around.

I also tried hiring other professional programmers, but I was terrible at interviewing and evaluating them; I made some bad hires and then had to let them go quickly. This happened three times. There was a Russian woman who impressed the hell out of me in her interview, and I hired her. But when she showed up for work, she couldn't write "Hello World" in C. The university doesn't like to fire people, because of the possibility of wrongful-termination lawsuits. They sent an HR person up from campus to lecture me.

Matt Lebofsky and Jeff Cobb didn't work directly on BOINC, but they did the sysadmin on the BOINC server, they helped move SETI@home to BOINC, and they helped in myriad other ways. We shared a big office at the Space Sciences Lab, had lunch together most days, and frequently took breaks in the late afternoon, during which we'd discuss music and mountain climbing, which we were all into. It was really great to work with them.

Jeff Cobb, me, and Matt Lebofsky in the Space Sciences Lab office

Getting off the ground

The next order of business was moving SETI@home onto BOINC. I worked with Eric Korpela to split out the SETI@home client into a self-contained application. Back then, screensavers mattered. In fact, some people called SETI@home "screensaver computing". I rewrote the SETI@home graphics using OpenGL, which allowed me to make it fancy and 3-D (it was, of course, inspired by Star Trek: The Next Generation).

When we released the BOINC-based version of SETI@home to the public, there was a lot of backlash. People don't like change in general, and they didn't like the complexity of BOINC. We lost a big fraction of our volunteer base; it went from ~600K to ~300K.

I was very eager to get Climateprediction.net (CPDN) working. It had very long jobs: 6 months on some computers. We added "trickle" mechanisms to let the jobs upload intermediate results, and to grant partial credit. I went to Oxford and spent a month working with Carl Christensen and Tolu Aina.

Bruce Allen called me up and proposed a project involving gravitational wave detection; this became Einstein@home.

Finally, I was contacted by IBM about moving their World Community Grid project (which originally ran on United Devices - I believe the only project to do so) to BOINC. There was a lot of concern about security, and IBM had their internal security experts evaluate BOINC; they found some vulnerabilities, and I fixed them.

But none of these projects was first out of the gate: that was Predictor@home, developed by Michela Taufer at the Scripps Institute.

So things got off to a great start, at least as far as having a lot of science projects, with a lot of energetic collaborators and a lot of technical challenges. However, I couldn't help noticing that there was no corresponding flurry of PR, as there had been for SETI@home in its first year. This was a bad omen.

People

BOINC attracted a really great group of people. Although I had conflicts with some of them, I liked pretty much all of them on a personal level. They hosted me in their home towns, I hosted them in Berkeley, we co-authored papers, I taught them to rock climb; we drank beer and became friends.

Francois Grey

The list needs to start with Francois; he was the biggest champion of BOINC, and its best spokesperson. Francois trained as a physicist. In ~2005 he was working at CERN, not as a scientist but as IT communication director. He loved the idea of BOINC, and invited me to CERN to give a talk and meet with people.

Francois is a masterful politician, in the best sense of the word. He unites people around causes. He figures out people's goals and motivations, and nudges them in ways that align them with other people. In this way he catalyzes activity. I've learned a lot from him, but I lack his innate people skills.

Francois and me. We're not actually arguing.

Once BOINC was established, Francois wanted to spread it around the world. He was especially excited about the idea of using BOINC to provide computing power to scientists in areas - Africa in particular - that didn't have much science funding or computing resources.

He organized tours by key BOINC people - me, Nicolas Maire, Carl Christensen, Ben Segal, and others - to various countries, visiting research labs and universities, trying to generate interest in volunteer computing. One of these was in Brazil, with stops in Brasilia, Rio, and São Paulo. Another was in Asia, visiting Academia Sinica in Taiwan and the Chinese Academy of Sciences (CAS) in Beijing. There was one in South Africa that I wasn't able to attend.

Francois gave a TEDx talk on BOINC, which is way better than any talk I ever gave. He was the driving force behind several of the BOINC workshops.

He spent a couple of years in Beijing leading up to the 2008 Olympics (his wife was working for the World Health Organization and was stationed there) and he convinced CAS to start a BOINC project, CAS@home (see below). We co-authored a paper about it.

Francois organized and catalyzed a lot of BOINC-related activity. Unfortunately, the activity didn't gain as much traction as it might have. Nothing happened in Brazil or South Africa after our trips there. CAS wasn't really committed to CAS@home, and it fizzled out. Eventually Francois had to find other things to focus on.

Michela Taufer

Michela Taufer is a computer scientist and computational biologist. She created Predictor@home, the first BOINC project, on her own. That must have been a tremendous amount of work. She later moved to the University of Delaware and started Docking@home.

Molecular simulations are numerically unstable, which means that different computers can compute widely differing but equally correct results. This makes it hard to validate results by comparing them. Michela came up with the "homogeneous platform" mechanism for dealing with this. We co-authored a number of papers.
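To make the idea concrete, here is a minimal sketch of how a server might restrict replicas of a job to "equivalent" computers so their results can be compared exactly. It assumes, purely for illustration, that a platform class is just the pair (operating system, CPU vendor); the `Host`, `Job`, `platform_class`, and `results_agree` names are hypothetical and are not BOINC's actual server code.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Host:
    name: str
    os: str          # e.g. "windows", "linux", "mac"
    cpu_vendor: str  # e.g. "intel", "amd"


def platform_class(host: Host) -> tuple[str, str]:
    # Hypothetical equivalence class: hosts in the same class are assumed
    # to produce bitwise-identical results for a numerically unstable app.
    return (host.os, host.cpu_vendor)


@dataclass
class Job:
    name: str
    replicas_needed: int
    assigned: list[str] = field(default_factory=list)
    locked_class: tuple[str, str] | None = None

    def can_send_to(self, host: Host) -> bool:
        if len(self.assigned) >= self.replicas_needed:
            return False
        # Before the first replica is sent, any class is acceptable;
        # afterwards, only hosts in the locked class qualify.
        return self.locked_class is None or platform_class(host) == self.locked_class

    def send_to(self, host: Host) -> None:
        if not self.can_send_to(host):
            raise ValueError(f"{host.name} cannot receive {self.name}")
        if self.locked_class is None:
            self.locked_class = platform_class(host)
        self.assigned.append(host.name)


def results_agree(results: list[bytes]) -> bool:
    # Replicas from one class can be compared exactly, byte for byte.
    return len(set(results)) == 1


if __name__ == "__main__":
    job = Job("sim_001", replicas_needed=2)
    hosts = [Host("a", "windows", "intel"), Host("b", "linux", "amd"),
             Host("c", "windows", "intel")]
    for h in hosts:
        if job.can_send_to(h):
            job.send_to(h)
    print(job.assigned)  # ['a', 'c']
```

The design choice here is that the first replica "locks" the job to a class, so validation can stay a simple exact comparison instead of a fuzzy numerical tolerance check.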

Michela was interested in the various scheduling policies in BOINC, and in the use of simulation to study these policies. She had a PhD student, Trilce Estrada, implement a system that could simulate a BOINC project and a volunteer population. It was actually an emulator - it used the real BOINC server code, not an approximation of it. I worked with them to factor the server code in a way that made this possible.

At some point Michela realized that working on BOINC wasn't going to lead to tenure, and she turned to other things. She's had a successful career, and was chairperson of the Supercomputing conference for several years.

Myles Allen, Climateprediction.net, and Oxford

Myles is a visionary climate scientist at Oxford University. He proposed using volunteer computing for climate research in a Nature article in 2000. I read this and immediately contacted him. They had done something remarkable: taking a state-of-the-art climate model - a giant FORTRAN program that had only been run on supercomputers - and getting it to run on Windows PCs. They initially hired a local company to develop the job-distribution software, but switched to BOINC as soon as it was available.

I was very eager to make CPDN a success. In 2005 I spent a month in Oxford, staying in Myles' house (he was away for the summer) and working with Carl and Tolu.

CPDN accomplished a lot but didn't fully live up to its potential. Carl didn't feel appreciated at CPDN, and he left in 2008. Tolu left a year or two later. That left CPDN without a lot of technical resources. Oxford appointed a "director of volunteer computing", but nothing came of it.

Bruce Allen and Einstein@home

Bruce is a world-famous physicist (he studied with Stephen Hawking) who also loves to play with computer hardware and software. In 2005 he approached me to discuss using BOINC to look for continuous gravitational waves; this became Einstein@home.

Bruce became very interested in BOINC, especially the part of the server that deals with job failures and timeouts. He encouraged me to formalize this as a finite-state machine. He also designed and implemented "locality scheduling", which tries to send jobs that use particular input files to computers that already have those files (Einstein@home has big input files, each of which is used by many jobs).
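The core idea of locality scheduling can be sketched in a few lines. This is a minimal illustration, assuming a simplified model in which each job needs exactly one large input file and the host reports the set of files it already holds; the `pick_jobs` function and its two-pass policy are hypothetical, not Einstein@home's actual scheduler.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    name: str
    input_file: str  # large input file shared by many jobs


def pick_jobs(queue: list[Job], host_files: set[str], n: int) -> list[Job]:
    """Pick up to n jobs for a host, preferring input files it already holds."""
    # Pass 1: jobs whose input file is already on the host (no download).
    chosen = [j for j in queue if j.input_file in host_files][:n]
    if len(chosen) == n:
        return chosen
    # Pass 2: choose one new file and fill the rest with jobs that share it,
    # so a single download serves several jobs.
    remaining = [j for j in queue if j.input_file not in host_files]
    if remaining:
        new_file = remaining[0].input_file
        same_file = [j for j in remaining if j.input_file == new_file]
        chosen += same_file[: n - len(chosen)]
    return chosen


if __name__ == "__main__":
    queue = [Job("j1", "f1"), Job("j2", "f2"), Job("j3", "f1"),
             Job("j4", "f3"), Job("j5", "f2")]
    print([j.name for j in pick_jobs(queue, {"f2"}, 3)])  # ['j2', 'j5', 'j1']
```

The payoff is bandwidth: a host that already has a big file keeps getting jobs for it, and when it must download something new, the sketch tries to make that one download count for several jobs.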

Bruce and me, 2005

We co-authored a paper and he graciously included me on many of the Einstein@home papers.

Bruce hired lots of smart people, many of whom contributed to BOINC. Reinhard Prix wrote the initial Unix build system. Bernd Machenschalk and Oliver Bock made a number of contributions; Oliver oversaw the transition from Subversion to Git (retaining the version history was hard). I visited Bruce a number of times, including a month in Potsdam.

At one point I suggested that BOINC's web code (login, message boards) could use Drupal, which would subsume some of its functions. Bruce liked this idea and had Oliver pursue it; they later hired Matt Blumberg and Tristan Olive to do some of the work. This took years to complete, and only Einstein@home used it. Meanwhile, BOINC's own web code evolved to the point where it surpassed Drupal for the purposes of BOINC projects.

Later, I visited Hanover to discuss replacing the locality scheduling code (which was E@h-specific) with something more general. This didn't get off the ground; Oliver's formal approach to software design didn't mesh with my more holistic, brainstorming-based methods.

Kevin Reed and World Community Grid (WCG)

Kevin was the main technical person at WCG for its entire life, except for one year.

He found and fixed many bugs in BOINC, in all parts of the code, and contributed many enhancements, especially in server-side scheduling; WCG presented lots of challenges in this area because of the diversity of their applications. Kevin might be the only person besides me who understands how everything in BOINC works and fits together. He proposed the idea of "matchmaker scheduling": making jobs in a wide range of sizes, and automatically sending small jobs to slower devices. (We implemented this, but it didn't get used).
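Matchmaker scheduling can be illustrated with a toy rule. This sketch assumes each job carries an estimated size in floating-point operations and each host a measured speed; the `match_job` name and the "largest job that fits before the deadline" rule are hypothetical, and a real scheduler would likely also need to consider availability, memory, and other factors.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    name: str
    flops: float  # estimated floating-point operations


def match_job(jobs: list[Job], host_flops_per_sec: float,
              deadline_sec: float) -> Job | None:
    """Return the largest job the host can plausibly finish before the deadline."""
    # Total work this host could do before the deadline, at its measured speed.
    budget = host_flops_per_sec * deadline_sec
    feasible = [j for j in jobs if j.flops <= budget]
    # Slow hosts end up with small jobs; fast hosts get the biggest that fits.
    return max(feasible, key=lambda j: j.flops, default=None)


if __name__ == "__main__":
    jobs = [Job("small", 1e12), Job("medium", 1e13), Job("large", 1e14)]
    day = 86400.0
    for speed in (1e8, 1e9, 1e10):
        job = match_job(jobs, speed, day)
        print(speed, job.name if job else None)
```

Producing jobs in several sizes up front is what makes this possible: the server has something appropriate on hand for both a slow laptop and a fast desktop.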

Kevin was also very active in the BOINC community. He helped organize and run workshops, and he chaired the Project Management Committee for several years.

Because of Kevin's imagination, breadth of knowledge, and ability to explain things clearly, I always thought he should get a PhD and go into academics. He and I co-authored a paper called "Celebrating Diversity in Volunteer Computing".

Me and Kevin, 2017

Speaking of WCG, I should also mention Bill Bovermann, who was the manager of the project until he retired and Juan Hindo took over. Bill's role was more organizational than technical. He's a great guy and we hit it off. He visited me in Berkeley once or twice.

Carl Christensen

Carl, together with Tolu Aina, was the main technical person at CPDN in its early days. He later was the technical lead at Quake Catcher Network. Carl and I worked together a lot. He added the libcurl HTTP library, which was huge; prior to that we had our own HTTP code, and it was a big headache.

Carl (like me) had various chips on his shoulder. He wanted recognition for his (often superhuman) efforts - for example, to be included as an author on papers. When this didn't happen, it irked him.

I recruited him to work for the BOINC project, and Bruce Allen recruited him to work for Einstein. In both cases he agreed, and we did lots of paperwork, but he backed out at the last minute.

I thought that Carl - like Kevin Reed - had academic potential, and we co-authored a paper.

John McLeod VII

John was active in BOINC software development from 2005 to 2009 or so. He worked a lot on - and contributed key ideas to - the client's job scheduling and work fetch policies. He's a brilliant guy, and was the only person besides me who understood (or was interested in) the gory details of client-side scheduling. We co-authored a couple of papers.

INRIA: Derrick Kondo and Arnaud Legrand

Derrick was a computer scientist who was working as a research scientist at INRIA, the French CS institute, with Arnaud Legrand. They were interested in various aspects of volunteer computing, such as characterizing the volunteer host population and studying the relative economics of volunteer computing, cloud computing, and other paradigms. We wrote about a dozen papers together, and they hosted two BOINC workshops at INRIA in Grenoble.

Another member of this group was Eric Heien, who had been an undergrad at UCB and worked on SETI@home. We co-authored a couple of papers on fast batch completion and host characterization.

Matt Blumberg

Matt contacted me in 2004 to discuss BOINC usability issues. He had lost a relative to cancer, and was gung-ho about the potential of BOINC for medical research.

Matt (and I) thought that the process for browsing and attaching to projects was cumbersome. He wanted to make a web site that shows all the projects in one place, and lets you attach to projects just by checking boxes.

To implement this, we needed to add a new mechanism to BOINC, in which the client periodically issues an RPC to an "account manager", and gets back a list of projects to attach to. Matt used this mechanism to make the web site he had envisioned, which he called "Grid Republic". The mechanism turned out to have other uses.

Matt had a contact in Intel, and he got them to create something called Progress Thru Processors, to promote BOINC and the role of Intel CPUs in it. This was done through Facebook - you could sign up, download BOINC, and see your stats without leaving FB. This seemed like a great idea, but it didn't gain traction.

Later, Matt got involved in Charity Engine, and was also on the Project Management Committee (see below).

U. of Houston: Jaspal Subhlok and Marc Garbey

Various people had the idea of using BOINC for MPI-style parallel computing. This is a nice idea but has myriad problems: home computers have wildly different speeds, they can fail randomly, etc. Jaspal - from the University of Houston - came up with a design that addressed these problems. For several years he ran a research project - Volpex - that implemented this design and used it (in a limited environment) for an actual MPI-type app. A PhD thesis came out of this (I was on the committee) and we wrote a few papers. I'm not sure Volpex would have worked very well in the real world, but in any case it never got used.

Also at UH there was a professor from France, Marc Garbey, who used BOINC to do simulations of plant growth on a prairie. Marc arranged for me to be given an Adjunct Professor title at UH (though the last time I checked, I'm no longer listed there).

Travis Desell

Travis developed Milkyway@home while a grad student at RPI. He pioneered the use of volunteer computing for "genetic algorithms": in particular, making these asynchronous, so that one generation doesn't have to end before the next one begins. I was on his thesis committee and traveled to RPI for his defense. I was co-author on a few of his papers.
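The difference between a generational and an asynchronous genetic algorithm can be sketched in a few lines. This is a generic steady-state scheme, not the algorithm Milkyway@home actually used: a fixed number of candidates are "in flight" on volunteer hosts, results come back in arbitrary order, and each returning result can immediately replace the worst member of the population, after which a new candidate is bred and sent out. The fitness function, the blend-plus-noise breeding step, and all parameters are hypothetical.

```python
import random


def fitness(x: float) -> float:
    """Hypothetical objective to minimize; the optimum is at x = 3."""
    return (x - 3.0) ** 2


def async_ga(evaluations: int, pop_size: int = 8, in_flight: int = 4, seed: int = 0) -> float:
    rng = random.Random(seed)
    # Population of (fitness, x) pairs, kept sorted so the worst is last.
    population = sorted((fitness(x), x) for x in (rng.uniform(-10, 10) for _ in range(pop_size)))

    def new_candidate() -> float:
        # Breed from the *current* population: blend two parents, add noise.
        (_, a), (_, b) = rng.sample(population, 2)
        return (a + b) / 2 + rng.gauss(0.0, 0.5)

    # Candidates currently being computed on (simulated) volunteer hosts.
    outstanding = [new_candidate() for _ in range(in_flight)]
    for _ in range(evaluations):
        # Results return out of order; there is no generation barrier.
        x = outstanding.pop(rng.randrange(len(outstanding)))
        f = fitness(x)
        if f < population[-1][0]:
            population[-1] = (f, x)
            population.sort()
        # Immediately send out a replacement job.
        outstanding.append(new_candidate())
    return population[0][1]
```

A slow or failed host only delays its own candidate; the rest of the search keeps moving, which is what makes this style suit volunteer computers.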

Travis graduated and got a job at U. North Dakota, where he continued to use BOINC as part of something called Citizen Science Grid.

Christian Beer and Rechenkraft.net

Christian was involved, along with Uwe Beckert and Michael Weber, in Rechenkraft.net, which promoted volunteer computing in Germany and ran a project called RNA World. He later was hired by Bruce Allen to work on Einstein@home.

Christian made lots of contributions to BOINC, especially to the system for translating text in the web code and Manager. He moved this system to Transifex, which worked out well. I appointed him to the Project Management Committee. He eventually left the BOINC world and resigned from the PMC.

Evgeny Ivashko and Natalia Nikitina

Evgeny and Natalia worked at the Russian Academy of Sciences, and devoted a big part of their careers to volunteer computing. I wish there were more CS academics like them. They ran several BOINC projects (mostly math-related), and wrote a number of research papers about various scheduling issues. They offered to host a BOINC workshop, which I'm sure would have been fantastic except that their location was remote, like a 5-hour train ride from the nearest airport.

Peter Hanappe

Peter worked at Sony Research in France. He did research in energy-efficient computing. It turns out that modern CPUs can go into low-power states, with reduced clock rate and voltages. Their efficiency (FLOPs per watt) is much better in these states. Peter studied this and gave talks at a couple of BOINC workshops around 2010.

It would be great if BOINC could, by default, compute in a low-power state. It would get a bit less computing done, but there would be significantly less energy usage, heat, and fan activity. The problem was that, as far as anyone could tell, there was no way for BOINC to put the CPU in a low-power state, or even to learn what the current power state was. We would have needed operating system support for this.

Nicolas Maire

Nicolas ran the malariacontrol.net project, which did epidemiological simulations and was based at the Swiss Tropical Institute in Basel. I visited him there once. Nicolas participated in Francois's "world tours" and in several of the BOINC workshops.

Ben Segal and CERN

In 2004, Francois Grey invited me to CERN to discuss BOINC. A project there, led by Eric McIntosh, was already using their desktop PCs to run a program called SixTrack that simulated particle beams in the LHC collider. They started a BOINC project called LHC@home that ran this application.

I met Ben Segal, who had been involved in the development of the World-Wide Web. At that point, CERN was moving to virtual machine (VM) technology. They had a big project called CERNVM to make it possible to efficiently run CERN jobs in VMs. It seemed like BOINC fit in very well with this - we could run the CERN VM in home PCs, using VirtualBox. We'd develop a program called "vboxwrapper" to interface between the VM and the BOINC client.

Tensions developed around vboxwrapper. People from CERN (notably Daniel Gonzalez) developed a version of it. The official BOINC version - which Rom developed - was based on this but modified it considerably. There wasn't good communication, and the CERN people (justifiably, I'm sure) felt slighted. At some point Ben stopped communicating with me.

BOINC-related activity continued at CERN. They had a summer-intern program that brought in computer science students, and they gave some of them BOINC-related projects. The problem was that they didn't discuss these projects with me, and the projects often went in non-productive directions.

Eventually CERN's BOINC activity was put under the direction of Laurence Field. Laurence attended a couple of BOINC workshops, and we persuaded him to be in charge of server release management and developer Zoom calls. He's gotten more involved in the BOINC community. But CERN seems to be losing interest in BOINC. The last time I talked with Laurence, he said that CERN IT was moving toward the use of GPUs - as if this were mutually exclusive with using BOINC.

Kamran Karimi

Kamran worked at DWave, a Canadian company that was trying to build a quantum computer. Kamran created and ran a BOINC project called AQUA that simulated the quantum computer. The project ran for several years. I don't think he got much support from DWave for this; he did it on his own. AQUA was the first project to deploy a multiprocess application in BOINC; I worked with Kamran to get this working.

Eric Korpela

Eric is an astrophysicist who likes computers. He designed and implemented big chunks of SETI@home. He had the idea of caching jobs in shared memory on the server, which is central to BOINC server performance. He did a lot of the work of converting SETI@home to BOINC - for example, writing the work generator, validator, and assimilator. He contributed a lot to the Unix build system for BOINC, based on the GNU tools (autoconf etc.). I invited him to join the Project Management Committee; he did, although he didn't get involved much.

Wenjing Wu and CAS

Francois Grey's efforts in China led to the creation of CAS@home. The idea was that CAS@home would host many applications, and would serve the computing needs of many Chinese scientists. We started with two applications: Lammps and TreeThreader.

The main technical person there was Wenjing Wu. The project was formally led by CAS's director of IT, Gang Chen. I don't think he was committed to it. There were no apps or scientists beyond the first ones, and no promotion of the project to the Chinese public.

An aside on China: on the first trip to Beijing organized by Francois, I gave a public talk about BOINC. The questions afterward made it clear that the idea of donating something to support research for the good of humanity was not resonating with the audience. One guy stood up and delivered a long-winded rant about how to make money from BOINC. At the time I was nonplussed by this, but in retrospect I should have thought more about it.

Rytis Slatkevicius

Rytis was involved in BOINC as a programmer from 2006 to 2012. He made numerous contributions, mostly to the web code, especially the forums and the caching system. Later he joined Matt Blumberg and worked on his projects, including Charity Engine.

Vijay Pande and Folding@home

Folding@home got started at Stanford around the same time as SETI@home, and it was similar in many ways. I contacted its director, Vijay Pande, about the idea of it using BOINC. He was very friendly, and open to this idea. I visited them a couple of times. They decided to try an arrangement where, instead of using the BOINC server, they ran the F@h client as a BOINC app. This required some changes to the BOINC client, e.g. to tell the F@h client when it could communicate. But there wasn't really any upside for F@h in doing things that way, and the project was dropped.

A few years later I ran into Vijay at an NSF event, and we discussed this effort. He said his technical people thought BOINC was way too complicated - that "life is too short to use BOINC". They had a point.

Today, while BOINC is declining, F@h is holding steady. They've clearly done something right. We should have figured out what it was and copied it.

Marius Millea

Marius is an astrophysicist. As a grad student, he created the Cosmology@home project. He was a big champion of Docker, which makes it easy and efficient to package and run software in containers, a lightweight alternative to virtual machines.

He developed a system for packaging the BOINC server as a set of 3 communicating Docker containers (DB server, web server, and everything else). This eliminated a lot of the sysadmin-level complexity in setting up a BOINC project. It was a brilliant piece of work. But it didn't lead to the creation of new projects, as far as I know.

Peter Kacsuk and SZTAKI

Peter ran SZTAKI, a CS research institute in Budapest. They became interested in BOINC early on. I visited them, and urged them to not just do research, but create a Europe-wide umbrella project. They didn't do this, and instead concentrated on BOINC/Grid integration and other "plumbing" stuff, which I considered a bit mundane.

Peter had some really smart grad students, including Attila Marosi and Adam Kornafeld. We exchanged several visits, and we had lots of fun not related to BOINC.

Peter created something he called the International Desktop Grid Forum (IDGF). People would pay a yearly fee to belong to IDGF, and in return they'd have access to improved documentation. I was leery of this - it seemed like they were trying to profit from my work, and I felt that if they were going to write documentation, they should make it freely available. But in retrospect, it wasn't a bad idea. You need money to be sustainable.

Peter was editor of the Journal of Grid Computing, a high-profile journal, and he invited me to join its board of editors, which I did.

Oded Nov

Oded was a professor in Human-Computer Interaction at NYU. He was interested in people's motivations for Internet-based volunteer activities like writing Wikipedia articles and contributing to open-source software projects. In 2011 he approached me about doing a study of BOINC volunteers.

I'd already - in 2006 - done such a poll, but he wanted more detail, and to also record things like how much computing each person had done, how many message board posts, and so on. I implemented this for him, and he made me co-author on six resulting papers.

However, we didn't make any changes to BOINC - its UI or its incentive mechanisms - based on the results of Nov's work. We should have.

David Toth

David was a grad student at Worcester Polytechnic Institute. He and his advisor, David Finkel, wrote several papers about scheduling issues in BOINC. He was also interested in the issue of volunteer motivation - how best to get people to run BOINC. As a professor at Merrimack College, he did an interesting project: he took 3 groups of subjects - students at the college - and presented each group with a different description of BOINC: one stressing science, one stressing credit and competition, one stressing community. He then polled them on their reaction. Essentially, he found that the three approaches were about equally effective - roughly 5% of people said they'd definitely run BOINC, and 10-15% said they might give it a try.

Toth's work - and that of Oded Nov - was potentially really important. In order to grow the volunteer base a lot - say, tens of millions - we needed to do massive PR, and we needed to know what message this PR should convey. Unfortunately, none of this ever happened.

Mark Silberstein

Mark created a project called Superlink@Technion, based at Technion in Israel. This was part of a larger system that involved many types of computing resources: the user's computer, a local cluster, Condor pools at several institutions, and the Amazon cloud. A meta-scheduler decided where to send jobs, based on the job characteristics, the current queueing delays at the various resources, the batch completion deadlines, and so on.

Mark is a super-smart guy with lots of forward-looking ideas; I wish he'd been more closely involved in BOINC.

Janus Kristensen

Janus was Danish; I don't remember how he came to BOINC. He was a key contributor from 2004 to 2009, mostly in the web code, and he did great work. He added a mechanism to cache web page contents, which we used lots of places (e.g. leader boards) to reduce server load. He invented the cross-project ID scheme. He added tons of features to the message board code, including moderation. He wrote the original language translation system.

Moderators: Jord, Kathryn, Richard

We had already learned, in SETI@home, that message boards are extremely important. They provide a technical support channel: experienced volunteers can answer questions for beginners. And they provide an important sense of community.

However, left to their own devices, Internet message boards devolve into a cesspool of spam, flame wars, and hate.

Several people served as "moderators" for the BOINC message boards: most notably Jord van der Elst, Kathryn Marks, and Richard Haselgrove. We implemented lots of features to support them: the ability to suspend people for various periods, to move messages between forums, and so on.

The moderators had two critical roles:

- To keep the message boards from devolving as described above.

- To relay important information (e.g. bug reports or good feature requests) to me and the other developers.

Vitalii Koshura

Vitalii is a Ukrainian living in Germany. He appeared from nowhere in 2018 and became a very prolific and productive developer. He has a full-time job, but his contributions to BOINC seem like a full-time effort too.

He got interested in the Android version, and completely reimplemented it in Kotlin, which Google now recommends over Java for Android development. He did massive work on the Github-based CI system.

More recently, he's been working with me on the App Library / BOINC Central concept. He ported Autodock to BOINC (and got his changes accepted in the Autodock repo) and made versions for lots of platforms. He's currently working on a "work generator" for Autodock, which takes JSON databases of proteins and molecules and creates batches of jobs. This will be the first app we support in BOINC Central.

Software: part 1

The software evolved continuously from 2002 until today. A fairly complete description of it is here. Some comments on the first few years of development:

The good

A central idea in the design of BOINC is to divide things up into separate pieces that communicate - possibly remotely - through well-defined interfaces. Examples:

- The client (which fetches and runs jobs) is separate from the GUI (the Manager). They communicate via network RPCs. This means you can control clients remotely, and you can write a new GUI if you want (and people did, such as BoincView, BoincTasks, and Fresco).

- Apps are separate from their graphics programs; they communicate through shared memory. The BOINC screensaver is a separate process that runs these graphics programs as needed.

- A BOINC server consists of many pieces - work generators, scheduler, assimilators, validators - that communicate through a SQL database. Each of these pieces can be distributed and/or replicated, allowing server capacity to scale as needed.

- BOINC projects are independent, and they provide RPC interfaces for things like creating accounts and getting credit data.

This approach worked out well. Some other important parts of the design:

- The distinction between app (abstract) and app version (executable for a particular platform) and the idea that a job is submitted to an app, not an app version.

- The "anonymous platform" mechanism, which lets users supply their own app versions. This made it easy for volunteers to create versions of SETI@home for GPUs and platforms that SETI@home itself didn't have access to.

- The wrapper mechanism, which let projects run existing executables, not linked to the BOINC library.

- The data architecture, which combines a relational DB and XML; we stored complex stuff (like job descriptions) as XML in SQL text fields.

- We used HTTP for all network communication; scheduler RPCs are HTTP POSTs. This avoided potential problems with firewalls and proxy servers.

- Computing "credit" was a big deal for volunteers. Since jobs come in all sizes, the unit of credit was not a job but a certain number of floating-point operations; we called this a "Cobblestone" (after Jeff Cobb). To prevent "credit cheating" we used job replication: run each job on two different computers and make sure the results match. Later we refined this to reduce the amount of replication.

- I realized we needed to provide and emphasize total credit across projects; otherwise volunteers would lock into single projects and never try new ones. We added a notion of "cross-project ID" for users and hosts. This linked accounts with the same email address without exposing the email address. Projects exported their credit data in XML files. This enabled "stats sites" that collated and displayed this data, at the level of computer, user, and team. Examples include BoincStats by Willy de Zutter and BOINC Combined Statistics by James Drews.

- We made all user-visible text (in the GUI, the project web code, and the BOINC web site) translatable. Volunteers did translations into about 20 (non-English) languages. We wanted to make volunteers from around the world feel welcome.
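The hybrid data architecture can be illustrated with Python's built-in `sqlite3` and `xml.etree.ElementTree` modules. The schema below (a `job` table with a `job_xml` text column) is a hypothetical simplification, not BOINC's actual schema: fields that need to be queried or indexed get their own columns, while the complex, variable-shaped job description is stored as XML in a text field and parsed in code when needed.

```python
import sqlite3
import xml.etree.ElementTree as ET

# Hypothetical, simplified schema: scalar fields get real columns so they can
# be indexed and queried; the complex job description is an XML blob.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE job (id INTEGER PRIMARY KEY, app TEXT, job_xml TEXT)")

job_xml = """<job>
  <command_line>--seed 42</command_line>
  <input_file>params.in</input_file>
  <input_file>model.dat</input_file>
</job>"""
conn.execute("INSERT INTO job (app, job_xml) VALUES (?, ?)", ("sim", job_xml))

# Select relationally by a real column, then parse the XML field in code.
row = conn.execute("SELECT job_xml FROM job WHERE app = ?", ("sim",)).fetchone()
doc = ET.fromstring(row[0])
command_line = doc.findtext("command_line")
input_files = [f.text for f in doc.findall("input_file")]
conn.close()
```

The trade-off is that the database can't query inside the XML, but adding a new kind of field to a job description needs no schema change.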
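Replication-based validation, in its simplest form, might look like the sketch below. It assumes a job's output is a list of numbers and that two replicas "match" when every value agrees within a relative tolerance, since different CPUs and compilers can produce slightly different floating-point results. The rule in `needs_replica`, which stops replicating for hosts with a long run of validated results, is a hypothetical illustration of reducing replication, not BOINC's actual policy; all names and thresholds are assumptions.

```python
import math


def results_agree(a: list[float], b: list[float], rel_tol: float = 1e-6) -> bool:
    """Replicas match if they have the same length and every value agrees
    within a relative tolerance."""
    return len(a) == len(b) and all(math.isclose(x, y, rel_tol=rel_tol) for x, y in zip(a, b))


def grant_credit(a: list[float], b: list[float], credit: float) -> float:
    """Grant credit for the job only if the two replicas agree."""
    return credit if results_agree(a, b) else 0.0


def needs_replica(consecutive_valid: int, threshold: int = 10) -> bool:
    """Hypothetical adaptive rule: replicate jobs sent to a host until it has
    returned `threshold` validated results in a row."""
    return consecutive_valid < threshold
```

A cheater who returns fabricated output would have to match an independent host's result to get credit, which is what makes replication a deterrent.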
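One way to link accounts by email address without publishing the address is to export an opaque identifier derived from it. The sketch below assumes the simplest such scheme, a SHA-256 hash of the normalized email; BOINC's actual cross-project ID scheme is not reproduced here, and a bare hash can be reversed by guessing likely addresses. `total_credit` plays the role of a stats site, summing each person's credit across the projects' exported data. All names are hypothetical.

```python
import hashlib
from collections import defaultdict


def cross_project_id(email: str) -> str:
    """Hypothetical opaque ID: SHA-256 of the normalized email address."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def total_credit(exports: dict[str, list[tuple[str, float]]]) -> dict[str, float]:
    """Sum (cross-project ID, credit) rows from every project's export,
    as a stats site might."""
    totals: dict[str, float] = defaultdict(float)
    for rows in exports.values():
        for cpid, credit in rows:
            totals[cpid] += credit
    return dict(totals)


# The same person registered on two projects, typing the email differently.
alice = cross_project_id("Alice@Example.org")
exports = {
    "project_a": [(alice, 1200.0)],
    "project_b": [(cross_project_id(" alice@example.org"), 300.0)],
}
totals = total_credit(exports)
```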

Build or buy?

This refers to the choice between using someone else's software or writing your own. There are typically advantages each way, and it can be a hard decision. This came up several times in BOINC.

For example, there was an open-source message-board system called phpBB, which started about the same time as BOINC. BOINC probably could have used it, but I decided to implement our own. This turned out to be way more work than I thought. On the other hand, phpBB turned out to have security vulnerabilities. (Vulnerabilities in BOINC's web code popped up a few times, but none was exploited as far as I know).

I used an XML-like format for state files. But it wasn't exactly XML; e.g., white space mattered. As time went on it became clear that, for interoperability, we needed to fix this. There were some XML libraries that I could have used, but they didn't parse directly to C++ structures, so I implemented my own "standards compliant" XML parser, and it took me a while to get it right. This decision was unpopular with some people.

The free market model

An early and fundamental decision was that projects should be as independent as possible. Each one has to:

- Create and maintain its own server, and develop its own web site.

- Develop its applications and make versions of them for various platforms (Win, Mac, Linux).

- Figure out how to attract and retain volunteers.

Projects would compete for volunteers, so they'd have an incentive to make good web sites, to do PR, to participate in their message boards, and so on.

The role of the BOINC project would be as small as possible; we'd maintain and distribute the software, we'd "vet" projects, and the BOINC server would distribute a list of vetted projects.

This "free market model" seemed like the obvious choice. It encouraged projects to do PR, as I wanted. It allowed the ecosystem to develop in whatever direction it wanted. And it minimized what I had to develop and maintain.

But, surprisingly, it turned out not to work as I intended, and in fact it severely stunted the growth of BOINC. See the Science United section below.

The bad and the ugly

The UI/UX got off to a rocky start. The interface looked like a spreadsheet, with lots of technical info that was irrelevant and intimidating to most users. To add a project, you had to type its URL. We eventually fixed this by distributing a list of vetted projects and providing a menu to select them. But we had made a bad first impression.

There were a couple of reasons for this. None of us (me, Rom, Charlie, others) had much intuition about UI design, especially for non-technical users. We really didn't know our target audience. The volunteers we interacted with - those who participated on the message boards and posted to the email lists - were mostly "power users" - computer geeks who wanted lots of information and lots of knobs to adjust.

Documentation - both for volunteers and for scientists - was a problem. I was cranking out server-side features at a tremendous rate. I wrote documents, but they tended to mix usage and implementation. They were full of ugly XML. These documents accumulated like a landfill, poorly organized and indexed. Eventually I made a single flat index, which was OK as a reference, but not as a tutorial.

Again, the problem was not knowing my audience: I was writing for BOINC experts, not for a scientist with limited computer skills, evaluating BOINC or trying to get a project working.

Software, part 2: Quality assurance

Quality assurance (QA) has been a challenge in BOINC.

Client and Alpha Test

A computer running BOINC has various properties:

- The hardware (CPU, GPU, RAM size, etc.)

- The software (OS version, video driver, security software, VBox version, etc.)

- The set of BOINC projects it's attached to.

- The settings of BOINC's dozens of configuration options.

You can think of these factors as forming a huge, high-dimensional parameter space. A bug - a crash, or something not working - might happen only at a particular point in this space. We (me, Rom, Charlie) had only a few computers, so we were able to directly test only a tiny fraction of the parameter space.

The main idea of a software release is that it should be as bug-free as possible. You do this by freezing development, testing, fixing bugs, testing again, then doing the release.

At first, our testing was cursory. We'd run the new version on our own computers for a day or two. If it worked, we'd announce it on an email list. If no one had reported any show-stopper bugs after a couple of weeks, we'd release it to the public.

This didn't work; in several cases show-stopper bugs surfaced after the public release. Absence of bug reports doesn't mean absence of bugs. Often the bugs were in use cases - such as a first-time install of BOINC - that no one had looked at.

In 2004 I realized that we needed to test more systematically.

- We needed lots of volunteer testers - dozens or hundreds. This wouldn't completely cover the parameter space, but it would cover a lot more of it than we could.

- These testers would be asked to test not only their typical use of BOINC, but a lot of specific tests, such as a clean install, adding/removing projects, using various config parameters, etc. I made a list of such tests, about a dozen of them.

- Testers would be asked to report the results of these tests, positive or negative, using a web interface. This would produce a "test matrix" where the rows were tests and the columns were types of computers. When this matrix was completely filled in with positive results, the version could be released to the public.
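The test-matrix release rule can be stated precisely in a few lines. The test names and computer types below are placeholders, not the actual Alpha test list, and the rule that a single failing report marks a cell as failed is an assumption about how multiple reports for the same cell would be combined.

```python
# Hypothetical rows (tests) and columns (computer types), for illustration.
TESTS = ["clean install", "attach project", "detach project"]
PLATFORMS = ["Windows", "Mac", "Linux"]


def build_matrix(reports: list[tuple[str, str, bool]]) -> dict[tuple[str, str], bool]:
    """Fold tester reports into cells; one failing report marks the cell failed."""
    cells: dict[tuple[str, str], bool] = {}
    for test, platform, passed in reports:
        cells[(test, platform)] = cells.get((test, platform), True) and passed
    return cells


def releasable(cells: dict[tuple[str, str], bool]) -> bool:
    """Release only when every cell is filled in and every cell passed."""
    return all(cells.get((t, p), False) for t in TESTS for p in PLATFORMS)


def empty_cells(cells: dict[tuple[str, str], bool]) -> list[tuple[str, str]]:
    """Cells nobody has reported on yet: where to ask testers to look next."""
    return [(t, p) for t in TESTS for p in PLATFORMS if (t, p) not in cells]
```

Unlike "no bug reports after two weeks", this rule distinguishes "tested and fine" from "never tested".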

The Client Emulator

A broad class of bugs have to do with the client's job scheduling and work fetch algorithms. People would email me complaining that a CPU core was idle, or that the client was fetching too many jobs.

Debugging a problem on a computer you don't have access to is hard. I'd try to guess what the problem was, make a change, send the user a new client executable, they'd send me back the new log files, and this would go back and forth over a week or two. Inefficient.

I came up with a system where I could reproduce the user's problem - in a simulated environment - on my own computer, where I could debug the problem efficiently. This used a web interface: the user uploads their XML state files (client state, config, prefs, etc.). Then a simulator - actually an "emulator", since it is based on the real client code - simulates things (job execution, work fetch, etc.) starting from that point. It produces a graphical output showing device usage, queue lengths, etc.

Implementing this required a careful refactoring of the client code - e.g. so that I could replace actual job execution with simulated execution. It was a lot of work, but it really paid off. I've used it to figure out and fix dozens of gnarly problems in the client. It's my favorite part of BOINC, I think.
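The refactoring that made the emulator possible amounts to putting job execution behind an interface, so the same scheduling code can drive either real processes or a virtual clock. The sketch below shows only that shape: the `Job` fields, the `Executor` interface, and the earliest-deadline-first policy in `run_queue` are hypothetical stand-ins (the real client's scheduling and work-fetch policies are far more involved), and only the simulated back end is shown.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Job:
    name: str
    est_seconds: float  # estimated runtime
    deadline: float     # seconds from the start of the run


class Executor(Protocol):
    def run(self, job: Job) -> float:
        """Run the job; return the time at which it finished."""
        ...


class SimulatedExecutor:
    """Emulator back end: advances a virtual clock instead of starting a process."""

    def __init__(self) -> None:
        self.clock = 0.0

    def run(self, job: Job) -> float:
        self.clock += job.est_seconds
        return self.clock


def run_queue(jobs: list[Job], executor: Executor) -> list[str]:
    """Shared scheduling code: earliest deadline first on one core.
    Returns the names of jobs that finished after their deadline."""
    missed = []
    for job in sorted(jobs, key=lambda j: j.deadline):
        if executor.run(job) > job.deadline:
            missed.append(job.name)
    return missed


jobs = [Job("a", 3600, 7200), Job("b", 3600, 3600), Job("c", 7200, 10000)]
missed = run_queue(jobs, SimulatedExecutor())
```

Since `run_queue` never knows which executor it has, a scheduling bug reproduced in simulation is the same bug the volunteer saw.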

Server

Testing the server software was even harder. It encompasses a lot of stuff: the scheduler and other job-handling programs, the PHP web code, the runtime library API, the wrappers, and the Python control scripts. Each of these has zillions of features. Many of these features were implemented for a particular project (e.g. trickle messages for CPDN and locality scheduling for Einstein), and it was infeasible for us (me/Rom/Charlie) to test them ourselves.

I encouraged BOINC projects to operate a separate "beta" project, with a few volunteers attached to it, that they could use to test new versions of the BOINC software, as well as new versions of their own applications. We did this in SETI@home; I think Rosetta and Einstein also had beta projects.

However, most projects didn't have beta projects. And most projects had - against my strong and repeated advice - modified the server code, making it hard for them to upgrade to the current code.

So the only way I could test changes to the server code was to deploy them on the SETI@home beta test project. (For web code, I usually deployed directly to the main project, since that got a lot more use.) But this tested only the features that SETI@home used, it didn't test them systematically, and it didn't test things that took a long time to happen (like a job timing out after 2 weeks).

Since there was no way to do thorough and systematic testing, we couldn't do releases in the usual sense. Instead, I'd just check changes into the repository, deploy them on SETI@home beta (or ask another project to test them, if it was a feature for that project). If something didn't work I'd fix it immediately. Thus, if the code hadn't changed in a week or two, it probably didn't have any show-stoppers.

But if projects checked out the code soon after a change, it could have a show-stopper; this bit Einstein a couple of times, and it pissed them off. At one of the workshops, Oliver Bock gave a vitriolic diatribe about server release management; he called our approach amateurish (but didn't suggest a viable alternative).

In ~2018, the PMC decided we needed to do release management of the server code, and they appointed Laurence Field to direct this. There were one or two "releases". But since they didn't involve systematic testing, they weren't meaningful.

Automated testing

Starting around 2016 there was a lot of activity around "automated build and test", AKA "continuous integration". After each pull request, automated tools linked to Github (e.g. Travis CI) kick in and build the software for the major platforms. There was a related effort to add "unit tests", which would also be run automatically to see if anything had broken.

A lot of people thought that this would solve our QA issues - that master - by definition - would always be bug-free, and releasable to the public. There was a sort of religious zealotry behind this.

This belief was misguided. People wrote unit tests for trivial stuff like string manipulation. Things like this aren't where the bugs in BOINC are. The bugs are in the interactions of large, complex pieces of software, and relational databases, and network communication. Out of the thousands of bugs that I've fixed in BOINC, I can't think of a single one that would have been found by a conventional unit test.

Which is not to say that automated testing of BOINC is impossible. One can imagine a framework for end-to-end testing that automatically creates a BOINC project, installs certain app versions in it, deploys some clients, creates some jobs, and then checks that DB contents and output files are correct (by some definition) after the dust settles.

In fact, I had begun implementing such a system, in PHP, in 2005 or so. I realized it was too hard - I'd probably spend more time debugging the system than debugging BOINC. But if someone were to complete such a system it would enable meaningful automated testing, and it could provide a basis for server release management.

The Github-based automated build system has been fairly useful - it finds compile errors and creates executables that people can download. But a huge amount of effort went into it. The same effort could have gone toward something - like the BOINC App Library and BOINC Central - with broader benefit. I guess this is an aspect of open-source software projects. Volunteers tend to work on things they like, rather than the things that benefit the project the most.

Volunteer energy

Early in SETI@home, I became aware that the volunteer population - the people supplying computing power - could do many other things as well. Lots of them - thousands, tens of thousands - had all sorts of skills, and were eager to help if we gave them a way to do so.

I started to think of my role as encompassing social engineering as well as software engineering. I tried to identify the ways in which volunteers could help, then create the communication channels and organizational structures that would let them do so. These mechanisms had to be scalable in terms of management; we didn't have much time to manage anyone, so we needed systems that were either self-managing, or where we could create a hierarchy in which experienced volunteers would manage less-experienced volunteers.

Some of these efforts were fairly successful:

- Message boards, on both the BOINC web site and on project web sites. Technical support was mostly done through the message boards. There was a core group of people who were on the message boards every day, answering questions for new volunteers. I added a fancy feature where you could see if a question had already been answered, creating a sort of self-organizing FAQ (like Stack Overflow). But no one used this.

- The Alpha test system described above.

- The language translation system; we've always had about 15 fairly complete translations.

- We had set things up so that projects export credit data. People used this for all sorts of purposes. They created sites (like BoincStats) that show credit across projects, and how it changes over time. They used it to create competitions for who could do the most computing for a given project in a given time period. They created a cryptocurrency (Gridcoin) based on credit.

- I added the notion of "team" to the web code, and it was wildly successful. People created teams for countries, companies, universities, types of computers, and so on. We set things up so that the credit-statistics sites could show team data - i.e. teams could compete. I liked this because it created an incentive for team members to recruit new users - their friends and family - to their team, and hence to BOINC. We added all sorts of Web features to support teams, like the ability for teams to have their own message boards, and to transfer "foundership" of teams in case the founder disappeared.

- We created email lists for projects, developers, translators, and team leaders.

- It seemed to me that real-time conversation was better than message boards for providing technical support. So I made a help system, based on Skype, where experienced volunteers can sign up to be helpers. New volunteers can find helpers who speak their language, then talk to them on Skype. I thought this was extremely cool - that there would be a self-organizing customer-support network, with calls going on all over the world, 24/7. It worked, but was not as widely used as I hoped.

Other efforts were mostly unsuccessful:

- I had the idea of using volunteers to do PR. I made a wiki page with a list of magazines and web sites that had something to do with computing and/or science. Volunteers were encouraged to post on these, write letters pitching stories on BOINC, etc. These efforts would be coordinated through the wiki page. But no one ever did any of this.

- I thought that if documentation were on a wiki, good documentation would magically appear. This was not the case. At the beginning, a guy named Paul Buck started writing in the volunteer-facing wiki. His writing was rambling, full of extraneous information and folksy "humor". When I told him that the documents needed to be short and to the point, he left in a huff. Between bad writing and spam, the Wikis became more of a liability than a strength. At some point I made them read-only to the public.

Bossa

In 2005 I got involved in Stardust@home, a project run by Andrew Westphal at Space Sciences Lab. Stardust used Internet volunteers to look at microphotographs of a chunk of aerogel that had collected interstellar dust particles in space. Humans - at least at that time - could do better than computers at finding the particles.

Someone called this "distributed thinking". There were lots of analogies with BOINC: jobs, redundancy-based validation, credit. Also the volunteer populations - science enthusiasts - presumably overlapped a lot.

Through Andrew, I got involved in a project led by Tim White to use distributed thinking to look for hominid fossils in close-up photographs of the ground in the Olduvai Gorge. This never went anywhere, but it inspired me to develop a software platform for distributed thinking, called Bossa.

Around that time, the Rosetta people developed an app called Foldit that let people fold proteins, trying to find the configuration with minimal energy. I flew up to Seattle to try to get them interested in Bossa. I failed: they were starting their own ambitious project for the "game-ification of science" or something.

And in fact Bossa was never used by anyone. Daniel Gonzalez developed a similar platform in Python, PyBossa, which got used for a few things. (I was miffed that he didn't include me in this.) Also, Amazon developed the "Mechanical Turk" around the same time.

Bolt

But what really got me excited (and still does) was education. BOINC gave us a giant pool of people interested in science. What if we try to teach them about the science they're helping - about electron orbitals, binding energies, simulated annealing, the magnetosphere, Doppler shift, and so on?

The students - BOINC volunteers - would have diverse demographics and backgrounds. And people have different preferred "learning modes". So to teach them effectively, you'd want to develop lots of different lessons for each concept, explaining it in different ways, in different media. Then you could do experiments to see which lesson works best for each type of student. Because of the constant stream of new students, you could be doing these experiments constantly (in traditional education, you can basically do one experiment per semester or year). The course could continuously evolve and improve, and it would adapt to each student.

I developed a software platform, Bolt, for doing this - for developing structured courses with lots of lesson alternatives, a database that records every interaction (even how many seconds a student looks at a page) and a way to conduct experiments.

I contacted people in the UC Berkeley education department, and located a researcher, Nathaniel Titterton, who was interested in Bolt, and we wrote a grant proposal to the education wing of NSF. It was a pretty good proposal; Rosetta@home agreed to act as a test case. But near the deadline I learned that NSF requires education projects to have an outside auditing agency verify their claimed results (apparently there's a certain amount of bogosity in the field) and this has to be lined up before you submit the proposal. I quickly looked around; the Lawrence Hall of Science had some people who were qualified to do this. But it was too rushed, and no one seemed to be as excited about it as I was. I never submitted the proposal. And Bolt never got used for anything (except my demo course on conifer identification).

BOINC Workshops

In 2005, Francois Grey proposed holding a "Pan-Galactic BOINC Workshop" at CERN, bringing together everyone using and developing BOINC ("pan-galactic" referring to SETI@home and/or Douglas Adams). This happened, it was a big success, and BOINC workshops became a yearly event.

Group photo from the 2006 workshop

The workshops were usually 4 days. The first 2 days were talks, then there were "birds of a feather" sessions, or "hackfests" of various sorts, and we ended with a couple of hours of free-form discussion.

The workshops were extremely valuable to me for several reasons:

- I learned what people needed. I came away from each workshop with a "to-do" list that kept me busy for the next several months. This was great for my enthusiasm and morale.

- We'd hear about really wild proposed uses of BOINC. E.g. there was a young man who did base-jumping from mountain tops, and he had plans for a BOINC project to do high-resolution projections of wind conditions.

- I was able to meet face-to-face with the leaders of all the projects. This was far more effective than email. Andy Bowery from CPDN always had dozens of questions for me. He'd write down the answers on a wad of Post-It notes; often the same questions would reappear the next year.

It happened that almost everyone involved in BOINC was a hiker, and some of us (me, Francois, David Kim) were pretty serious mountain climbers. So it became a tradition that the day after the workshop we'd go on a long-ish hike in whatever mountains were nearby.

Hiking up Montserrat near Barcelona, 2009

A list of the workshops is here. For me, some of the more memorable ones were:

- 2007, Geneva. It was held in a beautiful chateau in the north of town. There was a wonderful feeling of camaraderie. On the last evening, a group of us walked all around Geneva. One by one people reluctantly returned to their hotels to prepare for early-morning flights. No one wanted it to end.

- 2009, Barcelona. It was held in a restored monastery of some sort. The post-workshop hike was at Montserrat, an amazing rock formation 30 miles away.

- 2011, Hanover. We met at Bruce's Max Planck Institute. The hike was at the Brocken in the Harz mountains.

- 2014, Budapest. I stuck around for the Palinka and Sausage festival.

- 2017, Paris. It was held in the Paris Observatory, where Marius Millea was working. We toured the catacombs afterward.

Up until 2014, I organized the workshops myself, in an informal way: to register, people just sent me an email with the title of their talk, and I put together the schedule. I'd begin each workshop with a "state of BOINC" talk. After 2014, the PMC took over, and assigned the role of organizer to other people. Registration became more complex. Also, I generally wasn't asked to give a keynote talk, or to have any other particular role. In a couple of cases I insisted on giving the talk; in 2019, pissed off about things, I didn't attend the workshop. This didn't elicit much comment.

Software, part 3

Comments on software development from ~2010 onward:

- Much of the work involved supporting apps that used GPUs and/or multiple CPU cores. On the client side, this involved generalizing the scheduler and work fetch mechanisms to keep track of these "computing resources" separately. Scheduler RPCs could now request a particular amount of work (measured in time) for each resource.

- On the server side, we needed a way to describe the requirements of each app version (e.g., what kind of GPU it needed, what range of driver versions, how much GPU RAM, and so on), in order to decide whether that app version could run on a particular host. I did this in a very powerful and general way called the plan class mechanism. The requirements could be expressed in XML, or by a C++ function.

- These new types of apps required generalizing the credit mechanism. Now that an app could have both CPU and GPU versions, we needed a way to assign credit that was "device neutral": a given job would get roughly the same credit no matter where or how it was computed. I came up with a way of doing this that came to be known as CreditNew, the name of the wiki page describing it. CreditNew is complicated and I'm not sure it ever worked exactly as I intended. It was wildly unpopular with people who obsessed about credit. But no one ever found any specific problem with it, or had any better ideas.

- We added support for apps that run VirtualBox virtual machines, using an "adapter" app called vboxwrapper. This eliminated the need to build app versions for Windows or Mac. But it didn't work with GPU apps. It was used by CERN and Cosmology@home, and it generally worked OK, though VMs seemed to use a lot of RAM and caused performance problems on many machines.

- We developed a client for Android. This turned out to be easier than I thought - Android is based on Linux. The client, compiled for ARM, ran with almost no changes. We had to develop a new GUI. We found a guy named Joachim Fritzsch to do this, and he did an excellent job. Bruce Allen paid him.

- To support nanoHUB and Condor/BOINC, we added web RPCs that let you efficiently submit batches (maybe thousands) of jobs, monitor their progress, and fetch their results. We also added a system where a project could have multiple job submitters, and it would prioritize jobs so that each job submitter would get a given share of the computing resource. This was extremely cool, but AFAIK it was never used.

- In conjunction with this, we added a "remote file management" system for efficiently moving job input files to the server. This used MD5 hashes to avoid file duplication, and it could garbage-collect files no longer in use.

- Mark McAndrews gave me some money to investigate "Volunteer Data Archival": using disk space on volunteer computers to store large amounts of data. Since computers are sporadically available, this isn't useful for online access; hence the "archival". The hard part is that volunteer computers can disappear forever, unpredictably. So you have to store data in a way that tolerates this up to some level. You could replicate the whole file a bunch of times, but that would use a lot of space. "Reed-Solomon" codes are better - they break the data into M pieces and add N extra pieces, and you can reconstruct the data from any M of the M+N pieces; i.e. you can tolerate the loss of any N pieces. The problem then is that you have to reconstruct the data in its entirety, on a server, which sort of defeats the purpose. So I came up with an extension of this called "multi-level coding" where you only reconstruct small parts of the data. Some very tricky algorithms are involved. This never got used, and I never bothered to publish it.

- Using volunteer computers to store data introduces the possibility that multiple projects want to do it, so you have to divide up the free space on each volunteer computer in a reasonable way, and notify projects when they need to free up space. I came up with ways to do this. So BOINC is now fully equipped to serve as a platform for data-intensive as well as compute-intensive applications. So far no project has used it for data-intensive applications.

- I revised the PHP web code to use Bootstrap, which uses CSS to control web site appearance instead of baking it into the code. This means that projects can customize their web sites by picking a Bootstrap "theme". I used this to update the SETI@home web site, and to make it dark (light text, dark background). This produced a lot of outrage. I tweaked the colors, and most people are happy now.
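The idea of giving each job submitter a share of a project's computing power can be illustrated with a small fair-share sketch. This is not BOINC's actual scheduler code: the `Submitter` class, the `next_job()` function, and the rule of charging each job's estimated run time at dispatch are assumptions made for illustration. The rule is simply that the next job comes from the submitter whose usage is lowest relative to its share.

```python
from collections import deque
from dataclasses import dataclass, field

@dataclass
class Submitter:
    # Hypothetical model of one job submitter within a project.
    name: str
    share: float                 # fraction of the resource it is entitled to (must be > 0)
    usage: float = 0.0           # computing time charged to it so far
    queue: deque = field(default_factory=deque)  # pending (job_id, est_seconds) pairs

def next_job(submitters):
    """Dispatch one job from the submitter furthest below its share.

    Returns (submitter_name, job_id), or None if no one has pending jobs.
    """
    candidates = [s for s in submitters if s.queue]
    if not candidates:
        return None
    # Usage normalized by share: lower means "owed" more of the resource.
    s = min(candidates, key=lambda s: s.usage / s.share)
    job_id, est_seconds = s.queue.popleft()
    s.usage += est_seconds       # simplification: charge the estimate at dispatch
    return s.name, job_id
```

With shares of 0.75 and 0.25 and equal-sized jobs, eight dispatches go six to the first submitter and two to the second. A production version would probably also let old usage decay, so that a submitter who has been idle for a long time can't monopolize the resource when it comes back.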
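The MD5-based deduplication and garbage collection in the remote file management system can be sketched as a toy in-memory store. Files are named by the MD5 digest of their contents, the submitter asks which digests the server lacks and uploads only those, and each file records which batches use it so unused files can be deleted. `FileStore`, `submit_files()`, and batch-based reference tracking are hypothetical names and choices for illustration, not BOINC's actual interface.

```python
import hashlib

class FileStore:
    """Toy server-side store keyed by the MD5 digest of each file's contents."""

    def __init__(self):
        self.blobs = {}  # digest -> file contents
        self.refs = {}   # digest -> set of batch ids that use the file

    def missing(self, digests):
        """Return the subset of digests the server doesn't have yet."""
        return {d for d in digests if d not in self.blobs}

    def upload(self, data, batch_id):
        """Store new contents and record that batch_id uses them."""
        digest = hashlib.md5(data).hexdigest()
        self.blobs[digest] = data
        self.refs.setdefault(digest, set()).add(batch_id)

    def add_ref(self, digest, batch_id):
        """Reuse a file already on the server, with no data transfer."""
        self.refs[digest].add(batch_id)

    def release_batch(self, batch_id):
        """Called when a batch is retired: drop its references."""
        for users in self.refs.values():
            users.discard(batch_id)

    def garbage_collect(self):
        """Delete files that no batch references; return how many were deleted."""
        dead = [d for d, users in self.refs.items() if not users]
        for d in dead:
            del self.blobs[d]
            del self.refs[d]
        return len(dead)

def submit_files(store, files, batch_id):
    """Submitter side: hash locally, send only files the server lacks.

    Returns the number of files actually uploaded.
    """
    by_digest = {hashlib.md5(f).hexdigest(): f for f in files}
    to_send = store.missing(by_digest)
    for digest, data in by_digest.items():
        if digest in to_send:
            store.upload(data, batch_id)
        else:
            store.add_ref(digest, batch_id)
    return len(to_send)
```

If two batches share a large input file, the second batch sends only its own new files, and the shared file is deleted only after both batches have been released.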
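To make the Reed-Solomon idea concrete, here is a toy version of the textbook evaluation-based construction. The M data symbols are treated as values of a polynomial of degree less than M, the polynomial is evaluated at N more points to produce N extra pieces, and the data is recovered from any M surviving pieces by Lagrange interpolation. For simplicity it works modulo the prime 257 and treats each piece as a single symbol. Real implementations usually use the field GF(2^8) and apply the code position by position across large blocks. This illustrates only plain Reed-Solomon coding, not the multi-level coding scheme described above.

```python
P = 257  # prime just above 255, so every byte value is a field element

def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial of degree < len(points)
    passing through the given (xi, yi) points, working mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if j != i:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P  # pow(.., -1, P): modular inverse
    return total

def encode(data, n):
    """Turn M data symbols into M + n pieces; any M pieces determine the data.
    The first M pieces are the data itself; the extra pieces may equal 256,
    one more than a byte, which is a quirk of using mod 257 here."""
    m = len(data)
    if m + n > P:
        raise ValueError("too many pieces for this field")
    points = list(enumerate(data))  # data[k] is the polynomial's value at x = k
    return list(data) + [lagrange_eval(points, m + k) for k in range(n)]

def decode(survivors, m):
    """survivors maps piece index -> value; at least m are needed."""
    if len(survivors) < m:
        raise ValueError("more than n pieces lost; data is unrecoverable")
    points = list(survivors.items())[:m]
    return [lagrange_eval(points, k) for k in range(m)]
```

For example, encoding the five bytes of "Hello" with three extra pieces gives eight pieces, and the data can be rebuilt after losing any three of them. This also shows the drawback mentioned above: decoding touches M surviving pieces, so with large pieces the data has to be reassembled in one place.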

BOINC and UC Berkeley

BOINC is based at UC Berkeley, but it's not exactly a close relationship.

The university has a PR office, which helps researchers write press releases and get media coverage. Bob Sanders was the person who handled science. Bob was helpful in promoting SETI@home. I tried to get Bob and the PR office to promote BOINC, but for some reason they were never interested.

For a while I pushed the idea of a virtual campus computing center: a university could set up a BOINC project, attach the PCs in their computer labs to it, promote it to their students and alumni, and make the computing power available to their researchers. This would be a great way to increase both science usage and volunteership. Only one university (U. of Westminster) did this, and only for a short period.

I tried to get UC Berkeley interested in this idea, and I had a couple of meetings with their campus IT people. There was initial interest in this, but it never went anywhere. IT people are (justifiably) very security-conscious, and generally veto things that have nonzero risk and no clear reward.

I made efforts to get the UC Berkeley Computer Science department interested in BOINC. For several years, I sent an email to the CS professors who were interested in distributed computing, listing a bunch of possible MS and PhD thesis topics arising from BOINC, and seeing if they had grad students potentially interested in these. I never received a reply to any of these emails.

I did, however, get some interest from Jim Demmel and Katherine Yelick (CS professors in the scientific computing area). They thought BOINC was interesting, and they invited me to give talks on it in their graduate seminars.

In 2001 I was contacted by Scientific American, with the idea of co-writing a paper with Jon Kubiatowicz, a CS prof who had a research project called Oceanstore that involved distributed storage. I met with him a couple of times; he was self-important and talked down to me (Oceanstore, BTW, generated some papers but little else). Anyway, we wrote a crappy paper that was made even crappier by the Scientific American editors. Embarrassing.

Academic computer science

BOINC tried to do something very hard: take a lot of untrusted, unreliable, and diverse computers, and make them into a (more or less) reliable, predictable, and easy to use computing resource. This led to a lot of hard research-type problems.

As we solved these problems, I didn't want to just implement the solutions - I wanted to write papers about them, to get the academic CS community interested in BOINC, so that even smarter people could find even better solutions. I ended up being author or co-author on 53 such papers.

There were pockets of academic interest in volunteer computing: the various people I mentioned above (Michela Taufer, David Toth, Derrick Kondo, Arnaud Legrand, Marc Garbey, Jaspal Subhlok, and others), Carlos Varela at RPI (because of Travis Desell), and Frank Cappello at U. of Paris-Sud (because of Gilles Fedak and XtremWeb).

In the first couple of years of BOINC, the broader academic Computer Science world showed a lukewarm interest in BOINC and volunteer computing. At the 2006 IPDPS (a high-profile distributed-systems conference) there was a session devoted to volunteer computing.